Introduction

Whenever I need to put even a simple application together there’s always a whole bunch of infrastructure I need to put in place. For a WPF application this can include:

- IoC Container

- Testing Framework

- MVP / MVVM Framework

- Logging

- ORM

This was recently the case when putting together the PDC downloader, so I'm going to write a quick post on each of these areas. Apart from the UI framework, everything should carry over to ASP.NET without too much difficulty.

There is not going to be a full example application, as I don't want to complicate the solutions. Each solution will contain the minimum code needed to get the relevant area up and running, with the smallest example I can give. Once you have things going, Google will provide more advanced help.

Part 1: Testing Framework

The first thing I end up needing in any solution is a testing framework, and currently my framework of choice is NBehave, a superb framework for writing tests in either a TDD or a BDD style.

http://nbehave.org/

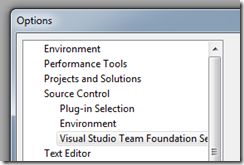

In my case I'm using MSTest; I prefer Gallio/MbUnit, but they currently don't work so well in VS2010. In any case, after creating a new C# project your referenced assemblies should look something like this:

So we are ready to put together our first spec! The example will contain a little bit of Rhino Mocks to show how NBehave integrates with it.

So let’s write our first BDD Story.

protected override void Establish_context()

{

this.Story = new Story("writing our first nbehave spec");

this.Story

.AsA("person new to nbehave")

.IWant("a simple example")

.SoThat("I can better understand how to use it");

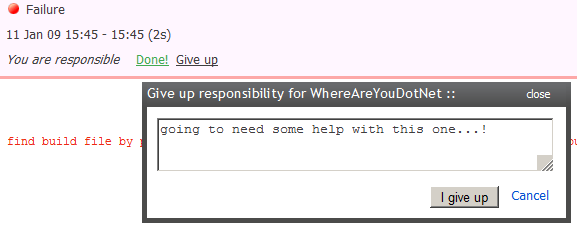

}This story doesn’t actually do anything, however if we ran the NBehave tool we would have that output together with the scenarios. This way when a test fails, you know exactly which business functionality has been affected.

    [TestMethod]
    public void APlainOldNBehaveSpec()
    {
        SillyPOCO sillyPOCO = null;
        string junglegon = "Junglegon";

        Story.WithScenario("a plain old nbehave spec")
            .Given("an object we are going to test",
                () => sillyPOCO = new SillyPOCO())
            .When("we set a property on that object",
                () => sillyPOCO.Name = junglegon)
            .Then("we should have changed that object",
                () => Assert.AreEqual(junglegon, sillyPOCO.Name));
    }

There's our first NBehave specification. It's pretty straightforward, and I particularly like how easy it is to read when the Given, When and Then clauses are accompanied by a well-written scenario. You might ask whether this way of testing scales to complicated business functionality or frameworks, and I can say it does. Better still, it forces you to improve the quality of your test code, making it more readable and less bulky, which is what my workmates and I have experienced on our current project.
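The spec above exercises a class that isn't shown in the post. Here is a minimal sketch of what SillyPOCO might look like, assuming it is nothing more than a class with a settable Name property (the real class in the repository may differ). The PlainSpecSketch helper is hypothetical and simply walks through the same three steps in plain C#, without NBehave or MSTest, to show what the lambdas are doing.

```csharp
using System;

// Assumed shape of the class under test: a single settable property.
public class SillyPOCO
{
    public string Name { get; set; }
}

// Hypothetical helper, not part of NBehave: the same steps as the scenario.
public static class PlainSpecSketch
{
    public static string Run(string name)
    {
        // Given an object we are going to test
        var sillyPOCO = new SillyPOCO();

        // When we set a property on that object
        sillyPOCO.Name = name;

        // Then we should have changed that object
        if (sillyPOCO.Name != name)
            throw new InvalidOperationException("Name was not changed");

        return sillyPOCO.Name;
    }

    public static void Main()
    {
        Console.WriteLine(Run("Junglegon"));
    }
}
```

The NBehave version does the same work, but the scenario text travels with each step, which is what makes the output readable.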

Anyhow, let's take a look at testing with Rhino Mocks. We are going to add a pointless dependency property to our silly object so we can test the interaction using mocks.

    [TestMethod]
    public void AnNBehaveSpecWithMocking()
    {
        SillyPOCO sillyPOCO = null;

        Story.WithScenario("a plain old nbehave spec")
            .Given("an object we are going to test",
                () => sillyPOCO = new SillyPOCO())
            .And("the object has a silly service",
                () => sillyPOCO.SillyService = CreateDependency<ISillyService>())
            .When("we call a method on the object",
                () => sillyPOCO.TalkToService())
            .Then("the service should have been called",
                () => sillyPOCO.SillyService.AssertWasCalled(service => service.Chatter()));
    }

NBehave provides a CreateDependency method as a wrapper for creating mocks. When setting up your expectations and asserts, you can use the Rhino Mocks extension methods as a simple way of checking that things happened the way you expected.
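To make the interaction being tested concrete, here is a rough sketch of the pieces the spec relies on. The shapes of ISillyService and SillyPOCO are assumptions inferred from the calls in the spec, and RecordingSillyService is a hypothetical hand-written fake standing in for the mock that CreateDependency would return. It is not how Rhino Mocks works internally, only an illustration of the kind of check AssertWasCalled performs for you.

```csharp
using System;

// Assumed dependency interface, inferred from the service.Chatter() call.
public interface ISillyService
{
    void Chatter();
}

// Assumed class under test: TalkToService() forwards to its service.
public class SillyPOCO
{
    public string Name { get; set; }
    public ISillyService SillyService { get; set; }

    public void TalkToService()
    {
        SillyService.Chatter();
    }
}

// Hypothetical hand-written fake: counts calls to Chatter().
public class RecordingSillyService : ISillyService
{
    public int ChatterCalls { get; private set; }

    public void Chatter()
    {
        ChatterCalls++;
    }
}

public static class MockingSketch
{
    // Wires up the fake, calls the method under test, returns the call count.
    public static int Run()
    {
        var service = new RecordingSillyService();
        var sillyPOCO = new SillyPOCO { SillyService = service };

        sillyPOCO.TalkToService();

        // Hand-rolled stand-in for the AssertWasCalled check in the spec.
        if (service.ChatterCalls < 1)
            throw new InvalidOperationException("Chatter() was never called");

        return service.ChatterCalls;
    }

    public static void Main()
    {
        Console.WriteLine("Chatter() calls: " + Run());
    }
}
```

With a mocking library you skip writing the fake by hand, which matters more as the number of dependencies grows.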

You can find the code for the examples in the Bitbucket repo:

http://bitbucket.org/naeem.khedarun/projectpit/overview/

The samples for this part are under a tag called partone. Simply get the repository and update to that tag.